Realistic cloth shading is the subject of significant and ongoing computer graphics research. Many approaches build explicit geometric representations of fabric, from knitted yarns to woven threads. Other methods use volumetric representations of the woven pattern, or rely on machine learning to capture and recreate the appearance of a given fabric, or both. Although effective, these methods either cannot quickly change the weave pattern or other significant characteristics of the cloth’s rendered appearance without remodeling or regenerating the required data, or they require specialized rendering systems designed specifically to evaluate a machine learning network.

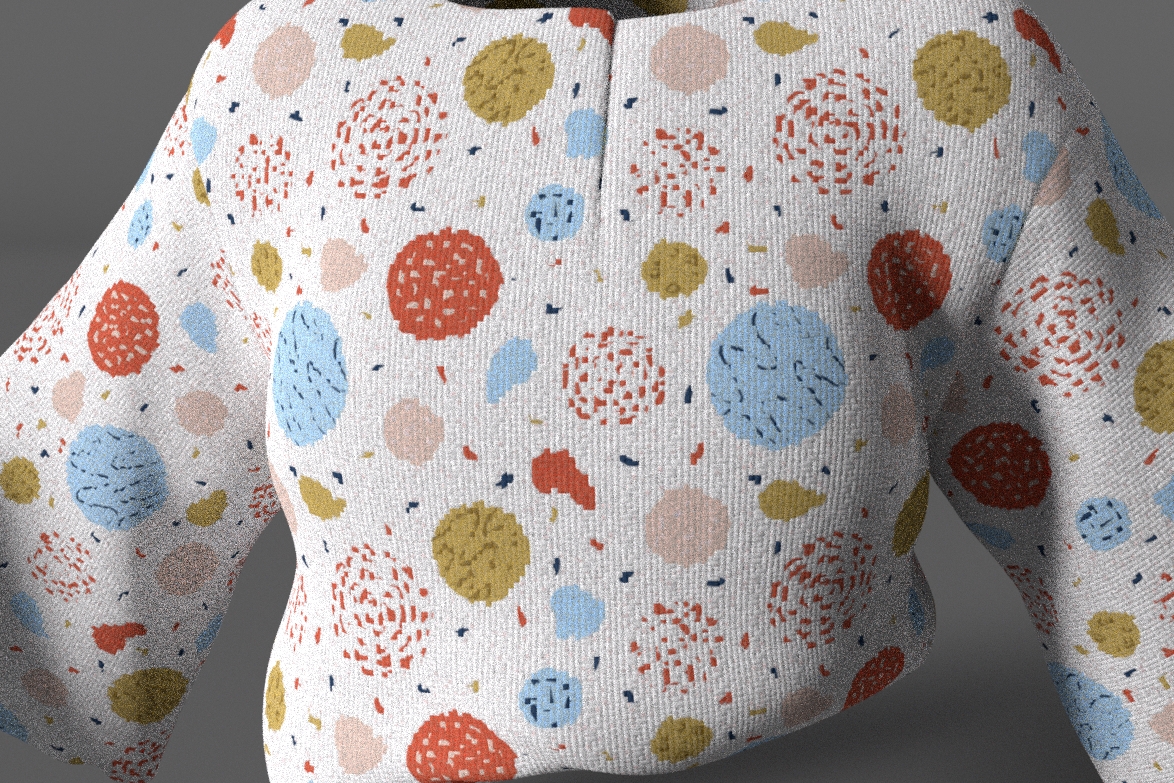

In this approach, a single pattern shader, written in a common shading language and operating as a point process within a generic rendering system, produces all of the signals required to create a realistic woven cloth appearance.
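As an illustration of what "operating as a point process" can mean, the following Python sketch evaluates a weave from a single (u, v) coordinate, with no neighbor lookups and no stored data. It is a minimal sketch under stated assumptions, not the shader described here: the weave-matrix encoding, the `weave_pattern` function, and the particular output signals (which thread family is on top, the thread tangent, across- and along-thread coordinates, and a cylindrical height profile) are hypothetical choices made to show the idea.

```python
import math
from dataclasses import dataclass

# Hypothetical weave matrices: 1 means the warp thread (running along v) lies
# on top in that cell; 0 means the weft thread (running along u) does.
PLAIN_WEAVE = [[1, 0],
               [0, 1]]
TWILL_2_2 = [[1, 1, 0, 0],
             [0, 1, 1, 0],
             [0, 0, 1, 1],
             [1, 0, 0, 1]]


@dataclass(frozen=True)
class WeaveSample:
    warp_on_top: bool              # which thread family is visible at this point
    tangent: tuple[float, float]   # unit thread direction in (u, v) space
    across: float                  # position across the visible thread, in [-1, 1)
    along: float                   # position along the thread within the cell, in [0, 1)
    height: float                  # cylindrical profile height, 1 at the thread center


def weave_pattern(u: float, v: float, weave: list[list[int]],
                  threads_per_unit: float = 10.0) -> WeaveSample:
    """Evaluate the weave at one (u, v) point using only that point's coordinates."""
    x, y = u * threads_per_unit, v * threads_per_unit
    col, row = math.floor(x), math.floor(y)
    fx, fy = x - col, y - row  # fractional position inside the current cell
    # Python's % keeps indices non-negative, so the pattern tiles for negative u, v too.
    warp_on_top = weave[row % len(weave)][col % len(weave[0])] == 1
    if warp_on_top:
        # Warp runs along v, so the across-thread coordinate comes from u.
        tangent, across, along = (0.0, 1.0), 2.0 * fx - 1.0, fy
    else:
        # Weft runs along u, so the across-thread coordinate comes from v.
        tangent, across, along = (1.0, 0.0), 2.0 * fy - 1.0, fx
    height = math.sqrt(max(0.0, 1.0 - across * across))
    return WeaveSample(warp_on_top, tangent, across, along, height)
```

Switching from `PLAIN_WEAVE` to `TWILL_2_2`, or changing `threads_per_unit`, changes the pattern on the very next evaluation, because nothing is precomputed or stored.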

This cloth pattern shading is then combined with cloth-specific combinations of BxDF responses. As a result, the visual characteristics of the cloth’s appearance can be adjusted interactively right up until final rendering, without any remodeling, data regeneration or analysis, or specialized rendering capabilities.
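To illustrate how pattern signals might feed a BxDF combination, the sketch below pairs a Lambertian diffuse lobe, tinted by whichever thread family is on top, with a Kajiya-Kay-style fiber highlight (in the common half-vector form) driven by the thread tangent. This is a swapped-in, textbook fiber model chosen for brevity, not the cloth-specific BxDF combination described here; `ClothParams`, `shade_cloth`, and all parameter values are hypothetical, and the thread tangent is assumed to be already expressed in the same 3D frame as the normal, light, and view directions.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def normalize(a: Vec3) -> Vec3:
    n = math.sqrt(dot(a, a))
    return (a[0] / n, a[1] / n, a[2] / n)


@dataclass
class ClothParams:
    # Hypothetical artist-facing controls; any of them can change between renders.
    warp_color: Vec3 = (0.55, 0.10, 0.10)
    weft_color: Vec3 = (0.10, 0.10, 0.45)
    spec_weight: float = 0.25
    spec_exponent: float = 40.0


def shade_cloth(normal: Vec3, tangent: Vec3, light_dir: Vec3, view_dir: Vec3,
                warp_on_top: bool, params: ClothParams) -> Vec3:
    """Radiance for a unit-intensity light: Lambert diffuse plus a fiber highlight."""
    n_dot_l = dot(normal, light_dir)
    if n_dot_l <= 0.0 or dot(normal, view_dir) <= 0.0:
        return (0.0, 0.0, 0.0)  # light or viewer is below the surface
    # The pattern's warp/weft signal selects the diffuse tint.
    albedo = params.warp_color if warp_on_top else params.weft_color
    # Highlight is strongest when the half vector is perpendicular to the thread.
    h = normalize((light_dir[0] + view_dir[0],
                   light_dir[1] + view_dir[1],
                   light_dir[2] + view_dir[2]))
    t_dot_h = dot(tangent, h)
    sin_th = math.sqrt(max(0.0, 1.0 - t_dot_h * t_dot_h))
    spec = params.spec_weight * sin_th ** params.spec_exponent
    return tuple((c / math.pi + spec) * n_dot_l for c in albedo)
```

Because every input arrives per shading call, editing `ClothParams` or the weave that produces `warp_on_top` and `tangent` changes the next render directly. A 2D (u, v) thread tangent such as (1, 0) maps to (1, 0, 0) in a flat tangent frame.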